The EU Council and Parliament have proposed delaying the full application of the EU AI Act for high-risk AI-based medical devices by 12 months to 2 August 2028.

The change is part of the Digital Omnibus on AI proposal, which aims to address delays in technical standards and to align the AI Act with the timelines of the Medical Devices Regulation (MDR) and the In Vitro Diagnostic Regulation (IVDR).

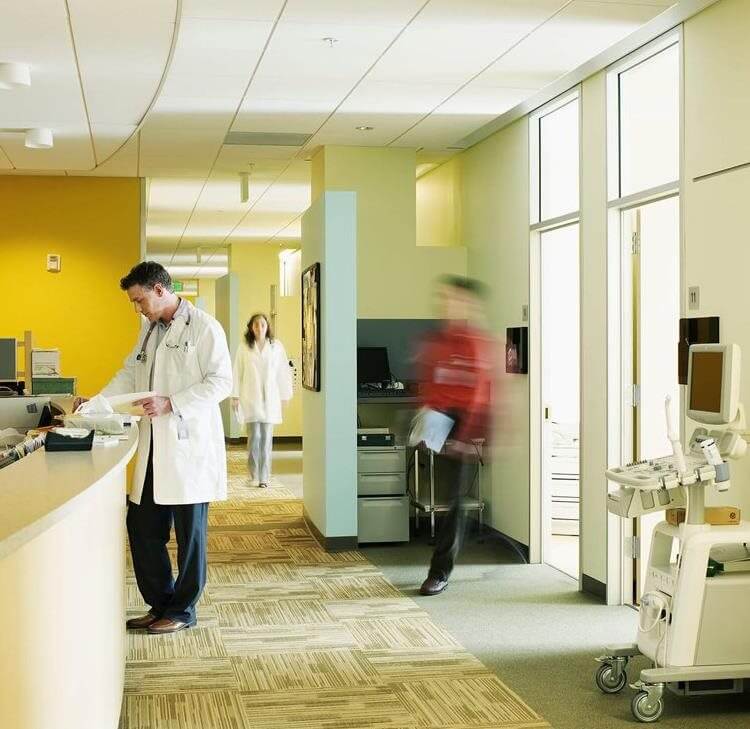

AI-based medical devices classified as high-risk under the EU AI Act include AI software used for diagnosis, monitoring critical physiological processes and supporting clinical decisions.

Commenting on the proposal, Gerard Hanratty, Partner and Head of Health and Life Sciences at UK and Ireland law firm Browne Jacobson, said: “The delay is necessary because the harmonised standards companies would need to comply with simply don't exist yet, so the previous timeline is unworkable.

“Whether 2028 is enough time will depend entirely on how quickly those standards materialise. Companies shouldn't treat this as breathing space. The direction of travel is clear and they need to be getting their houses in order now.

A complex compliance matrix should be avoided for medical devices

“The instinct is right, and it's a sensible approach. If you create a complex, overlapping compliance matrix, companies will simply seek authorisation somewhere else – somewhere faster and less burdensome – and Europe loses its grip on the technology.

“The EU is learning from issues which have arisen with the implementation of the GDPR: rigidity that was designed to protect ends up creating barriers to the very innovation you're trying to govern.

“Reducing the overlap with the MDR helps, but there are still too many layers – MDR, AI Act, national variations on standards – and that needs to be resolved.

Post-market surveillance should be a priority for regulators

“The regulatory emphasis needs to shift. For AI that learns and adapts, what really matters is what happens after the product is on the market – is the algorithm still doing what it's supposed to do? Is it behaving in ways nobody anticipated?

“We should be putting our energy into robust post-market surveillance rather than trying to second-guess every conceivable risk before authorisation. Pre-market scrutiny has its place, but too many front-end requirements will delay patient access and potentially push companies to other jurisdictions.

What the UK can learn from the EU’s move

“If the compliance environment stays too complicated and too slow, some of the most innovative products simply won't come to Europe first – or at all. That's bad for the industry and bad for patients.

“The changes being discussed move in the right direction, but there's a broader point here for the UK as well. As Britain develops its own approach to AI in medtech, it must make sure it doesn’t layer new obligations on top of existing ones without thinking through the cumulative effect.

“A streamlined, internationally aligned system is what attracts investment and gets innovative technology to patients. The EU is course-correcting. The UK should be learning from it.”

Contact

Dan Robinson

PR & Communications Manager

Dan.Robinson@brownejacobson.com

+44 330 045 1072